Behind the Magic

When Medical AI Gets the Wrong Answer

I like to say, “All this talk about AI in medicine just fills me with ennui. I hope I am retired before I really have to deal with it professionally and dead before I have to deal with it as a patient.” In reality, there is a lot in it that I find interesting, and there is pretty much no chance that I’ll get out of here (medicine or life) without dealing with it. Today’s article is by a student presently taking a class with me. It proves the tired old trope, “Teachers learn more from their students than they teach them.”

Adam Cifu

For years, opening a blocked coronary artery seemed like an obvious imperative, but the COURAGE Trial challenged that assumption. The ISCHEMIA Trial furthered the argument. The reversal is now settled, but these shifts don’t happen overnight. In the years immediately following COURAGE, a clinical decision support tool searching for evidence on stable angina would have surfaced more papers supporting stenting than opposing it. This is a structural problem that persists in how modern AI retrieval systems operate. That early window, where the new evidence exists but is outnumbered, is where today’s AI clinical reference tools are likely to fail.

A brief primer

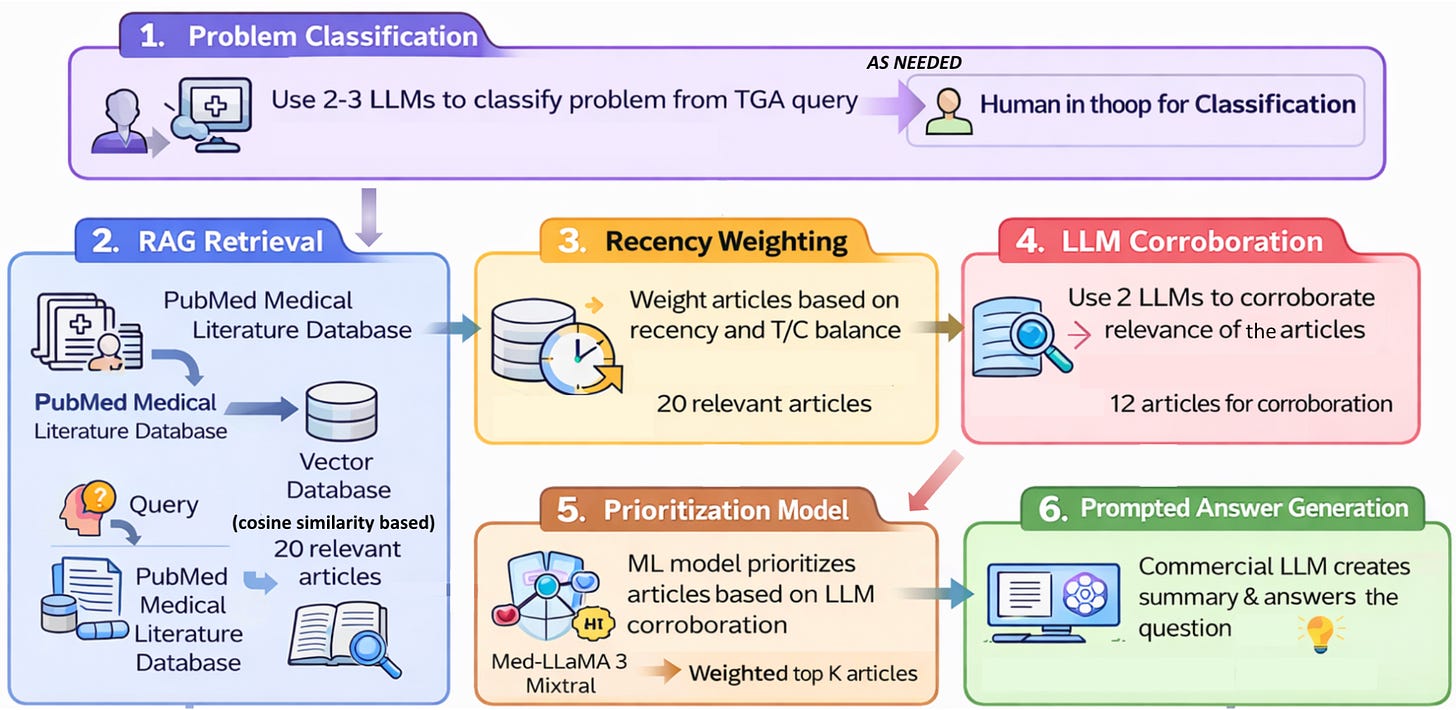

Most AI systems that answer clinical questions rely on a framework called Retrieval Augmented Generation (RAG) to ground responses in actual evidence. Instead of answering from memory alone (patterns captured during training), the model first searches a database of medical literature, retrieves the most relevant articles, and then synthesizes an answer from what it finds.

The “relevant” part is where these systems can display sub-standard deduction skills. Standard retrieval ranks articles using cosine similarity, which measures how closely the words and meanings of an article match those of the question. It is a measure of topical overlap, not of evidence quality, recency, or whether the article has since been contradicted. This is fine when the evidence base is stable and coherent, but becomes a problem when it isn’t.

The medical reversal connection

Readers of Sensible Medicine are likely familiar with medical reversal: the phenomenon in which an accepted therapy is found, upon rigorous study, to be no better (or worse) than what it replaced. Prasad and Cifu published an article stating that roughly 13% of articles making claims about medical practice in a single year of the New England Journal of Medicine constituted reversals.

We need to start thinking about how AI will handle reversals. When a medical practice has been standard for years and is then overturned by a handful of newer studies, the numerical balance of the literature favors the old practice. Cosine similarity retrieves the most textually similar query, not what represents the current best evidence. Recent work by Javadi et al. demonstrated this empirically: when retrieved documents contain contradictory evidence, RAG accuracy drops by up to 20%. Instead of the contradiction being surfaced to the user, it gets fused with the answer, leading to a drop in overall accuracy.

What a fix might look like

The solution is to stop treating retrieval as a single, final step and to start engineering the pipeline to account for evidence quality. A multi-stage approach could include:

recency weighting to prevent newer evidence from being drowned out,

relevance pruning to remove topically adjacent but clinically irrelevant results,

and a prioritization layer that weights by study design, sample size, and source credibility at both the journal and individual article level.

Ideally, the prioritization layer would contextualize what it retrieves, since metrics like absolute risk reduction carry different weights depending on clinical context. In other words, the same evidence hierarchy that every medical student learns in her first epidemiology course is applied programmatically to the retrieval step.

For example, if you are searching for information about percutaneous coronary intervention for stable angina soon after the publication of the Ischemia Trial, you would want the output to include more than aggregated results: it should surface the reversal, lay out the evidence on both sides, and anchor its recommendation in the strongest recent data.

But this is a research direction, not a deployed fix. So what can clinicians do today?

First, pay attention to the citations. If the tool is citing papers from 2011 and nothing from the last two years, that’s a signal.

Second, ask yourself whether the AI’s confidence matches your own. If the tool gives you a clean, unhedged answer on a topic you know is contested, that’s not reassurance. That’s a retrieval system that didn’t surface the debate.

Third, use the tool the way you’d use UpToDate ten years ago: as a starting point, not a stopping point.

Arthur C. Clarke observed that ‘sufficiently advanced technology is indistinguishable from magic,’ but a good clinician might try to distinguish it anyway.

Why this matters now

A growing number of AI-powered clinical decision support tools are entering physician workflows: OpenEvidence, UpToDate Expert AI, DoxGPT, etc. Though their architectures vary, most share a common structure: a commercial language model provides the reasoning, domain-specific fine-tuning shapes how the model handles medical information, and a retrieval layer determines which documents actually get seen. If that last step is biased, the reasoning inherits that bias.

The bottom line

This problem in AI retrieval systems is important well beyond the handling of reversals. Emerging infectious diseases, newly approved therapies, updated screening guidelines: any domain where the evidence is actively evolving is one where standard RAG is likely to lag. The attending who trained during the ‘go slow on sodium’ era can instantly update her priors when she reads a new study. The retrieval system and vector database cannot.

The practical takeaway is not to avoid these tools but to resist treating them as oracles. The hierarchy of evidence exists precisely because not all studies deserve equal voice, and our retrieval systems haven’t quite mastered that. It’s a solvable engineering problem, but until then, it’s important to recognize these shortcomings and keep a critical eye on the AI in your pocket.

Alex Knapp is a joint MBA/MS Biomedical Sciences student at the University of Chicago, where she studies how digital innovation in healthcare delivery can improve patient outcomes. In a previous life, she was an EMT, a big tech employee, and a consultant. She’ll be at a surgical robotics company this summer, working at the intersection of healthcare, AI/ML, and product strategy.

Excellent recognition and discussion of an important problem.

I wonder what Alex thinks would be the best approach to identify and correct her own bias that all medical students who study epidemiology choose female pronouns. (“the same evidence hierarchy that every medical student learns in her first epidemiology course”)

Case in point: I asked the same question of 2 different med AI programs and one cited evidence from 2017 or earlier; the other was updated appropriately. Check the sources.