The study in question is a randomized clinical trial of the Million Hearts Model, which paid health care organizations to assess and reduce their patients' cardiovascular (CV) risk.

Obviously, this is an important goal. Heart disease, specifically atherosclerotic vascular disease, is a leading cause of death. Any reduction in heart disease should benefit both the individual and the population.

But paying health systems to do specific things is a policy intervention. Even a policy that makes as much sense as this one can have both benefits and harms.

(An example is the Hospital Readmissions Reduction Program (HRRP), which penalized hospitals for excess readmissions. It resulted in fewer readmissions, but it was also associated with an increase in death rates among patients with heart failure.)

Both Andrew and I were happy that the nudging in Million Hearts was studied.

The Trial and Program

This was a large, pragmatic, cluster-randomized trial that ran over 4 years. More than 300 organizations were randomly assigned 1:1 to the Million Hearts model or to standard care.

The model had two parts. First, organizations received $10 for every patient whose 10-year risk was calculated with a risk equation. (The ACC/AHA equation is a simple one you can do in 15 seconds on a smartphone.) Second, CMS paid each organization $0, $5, or $10 per beneficiary per month (PBPM) for each high-risk beneficiary who had an annual risk reassessment. The monthly amount depended on the mean change in risk score across all of the organization’s reassessed high-risk beneficiaries.

Keep in mind that the only modifiable components of the risk calculation are cholesterol and blood pressure (and, for smokers, smoking cessation).

Foy pointed out that Million Hearts was in many ways an incentive system to nudge providers, who then may nudge patients, to take more BP and cholesterol medicine.

The authors chose two primary outcomes. The first was a MACE endpoint of MI, stroke, and TIA. The second was the same composite plus CV death.

They originally planned to include only high-risk patients, but then added moderate-risk patients. This factored heavily into the results.

Patients were mostly around 75 years old, and about two-thirds were men and one-third women. Outcomes were derived from claims data, which are messy when it comes to judging MIs, TIAs, and specific causes of death.

The Results:

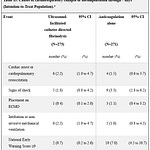

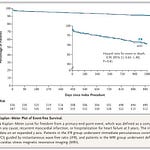

The first primary endpoint (MI, stroke, TIA) occurred at a rate of 14.8 per 1000 patient-years vs 17.0 per 1000 patient-years. The hazard ratio was 0.97 (90% CI, 0.93-1.0), with P = 0.09. (The authors had prespecified a P threshold of 0.10.) The second primary endpoint, which added CV death, was similar: HR 0.96 (90% CI, 0.93-0.99), P = 0.02.

These are positive results. But let’s look further.

Drivers of the Results: The results were driven almost exclusively by moderate-risk patients. Look at Table 3. Reductions in event rates were largest, and statistically significant, in the moderate-risk group but not in the high-risk group.

That is something we have emphasized here at Sensible Medicine. Even though you would think that high-risk patients have the most to gain, they also have more competing risks and perhaps a greater chance of treatment harm. As in so many other studies, the sweet spot for primary prevention seems to be the moderate-risk group.

Unintended Consequences: A second finding, noted by Andrew, was the highly significant increase in all-cause hospitalizations in the intervention group. These had the smallest p-values in the entire study.

Other Limitations:

Participation in the Million Hearts randomization was offered to more than 500 organizations, but only 342 accepted. This raises questions about generalizability. Were the 342 organizations special in some way?

Another factor is that outcomes were modeled on a sample of events rather than on raw counts.

The choice to use 90% rather than 95% confidence intervals, and a P threshold of 0.10 rather than the more standard 0.05, is a weakness. For instance, the first primary endpoint (P = 0.09) would have missed significance if it had been evaluated in the usual fashion. I did not find a strong justification for this choice. Readers with statistical expertise, please weigh in.
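To see why the first primary endpoint hinges on the confidence level, here is a minimal Python sketch that re-expresses a reported 90% CI for a hazard ratio as a 95% CI. It assumes a normal approximation on the log-HR scale, so the standard error can be recovered from the width of the published interval. Because the published limits are rounded, treat the output as approximate. The function name `rescale_ci` is hypothetical, chosen for illustration, and is not from the trial report.

```python
import math
from statistics import NormalDist


def rescale_ci(hr, lower, upper, from_level=0.90, to_level=0.95):
    """Re-express a hazard-ratio CI at a different confidence level.

    Assumes a symmetric, normal-approximation interval on the log-HR scale
    (a simplifying assumption, not the trial's stated method).
    """
    z_from = NormalDist().inv_cdf(0.5 + from_level / 2)  # about 1.645 for 90%
    z_to = NormalDist().inv_cdf(0.5 + to_level / 2)      # about 1.960 for 95%
    # Standard error of log(HR), recovered from the reported interval width
    se = (math.log(upper) - math.log(lower)) / (2 * z_from)
    log_hr = math.log(hr)
    # Rebuild the interval around the reported point estimate
    return math.exp(log_hr - z_to * se), math.exp(log_hr + z_to * se)


# Reported 90% CIs for the two primary endpoints (rounded values from above)
first = rescale_ci(0.97, 0.93, 1.00)
second = rescale_ci(0.96, 0.93, 0.99)
print(f"MI/stroke/TIA, 95% CI: {first[0]:.3f}-{first[1]:.3f}")
print(f"Plus CV death, 95% CI: {second[0]:.3f}-{second[1]:.3f}")
```

With these inputs, the 95% interval for the first endpoint runs from roughly 0.93 to 1.01 and so crosses 1.0, while the interval for the second endpoint stays just below 1.0 (upper limit about 0.996).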

Our Conclusions:

First, we were both happy that a policy was studied rather than simply implemented because it seemed sensible. This should serve as a model for future policy endeavors.

Second, there did appear to be a modest effect on reducing important outcomes, and it was driven mostly by moderate-risk (not high-risk) patients. This argues for a heterogeneous treatment effect based on co-morbidity.

Third, the statistically significant increase in all-cause hospitalizations in the intervention arm suggests that more aggressive attempts to lower blood pressure and cholesterol levels may have raised the risk of off-target ill effects.

In the end, Andrew felt like the study was a wash. He did not feel strongly that the Million Hearts endeavor made a real difference.

Comments on our Audio: I think we misspoke about the patient-years. We said per 100,000 patient-years, but it was per 1000 patient-years. I also think we misspoke about deaths being similar. They were actually slightly lower in the intervention arm.

Recall that Sensible Medicine remains a subscriber-supported site. Thanks for your generous support. We are excited to bring you content that can’t easily be found elsewhere. I have an excellent recording to post soon on screening for atrial fibrillation.